This Horizon2020 FET-HPC ExaNeSt project develops and prototypes solutions for some of the crucial problems on the way towards production of Exascale-level Supercomputers:

WHAT we do...

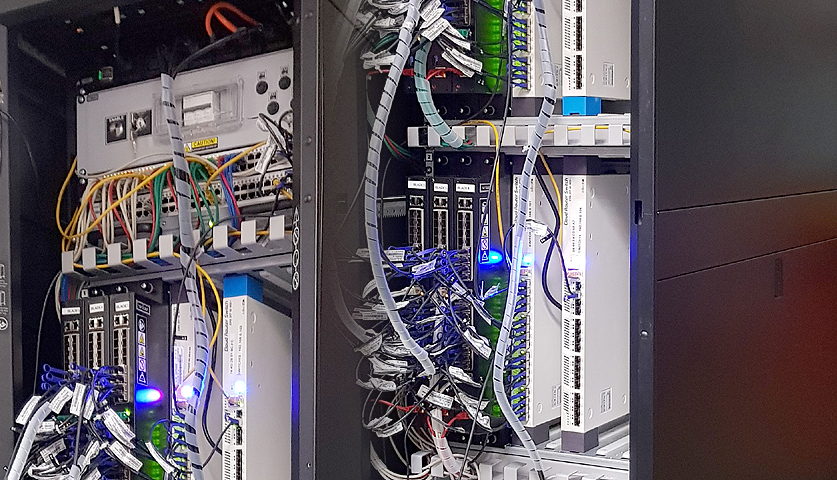

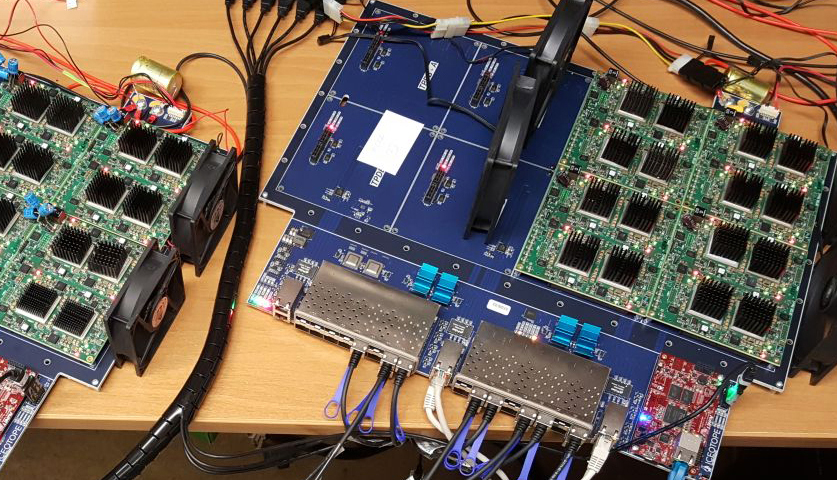

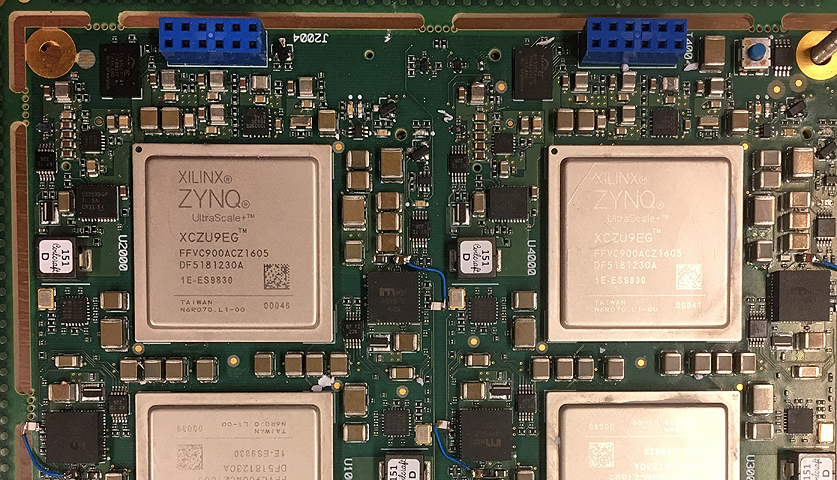

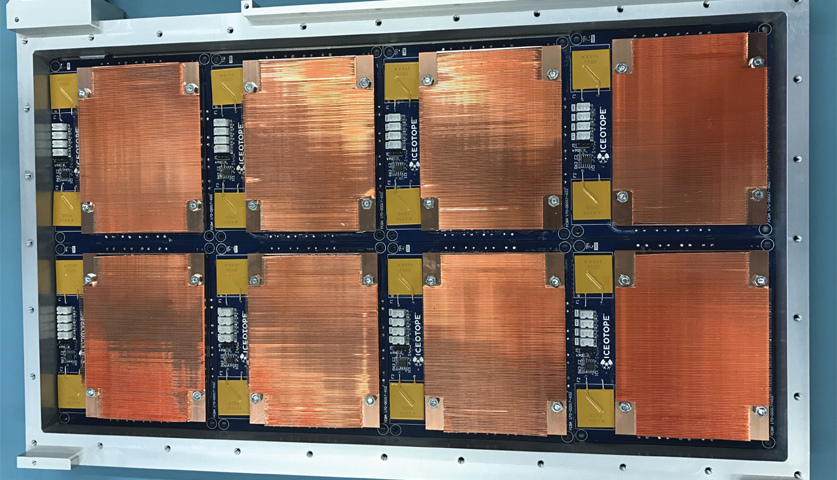

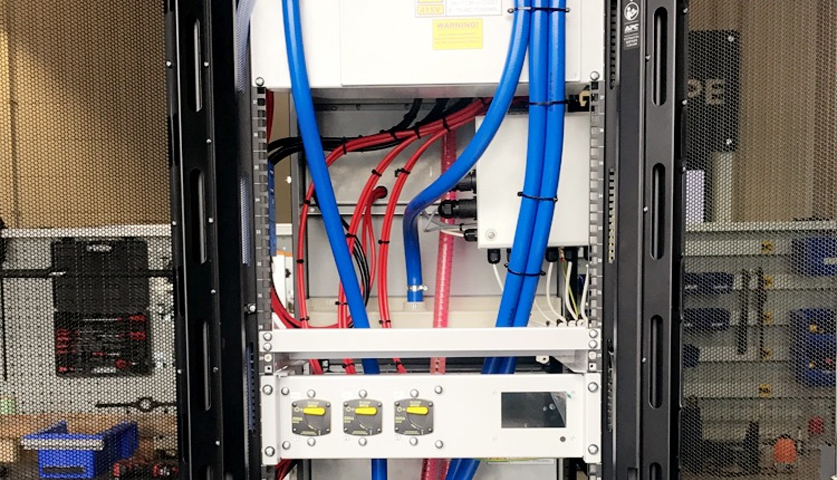

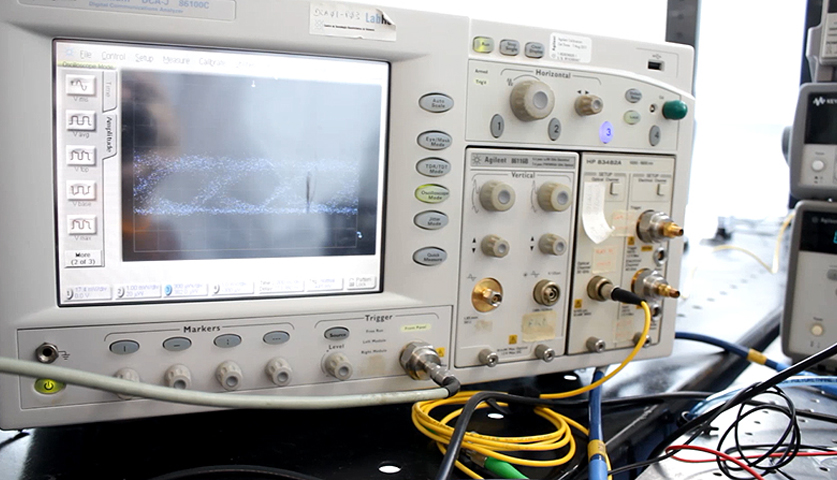

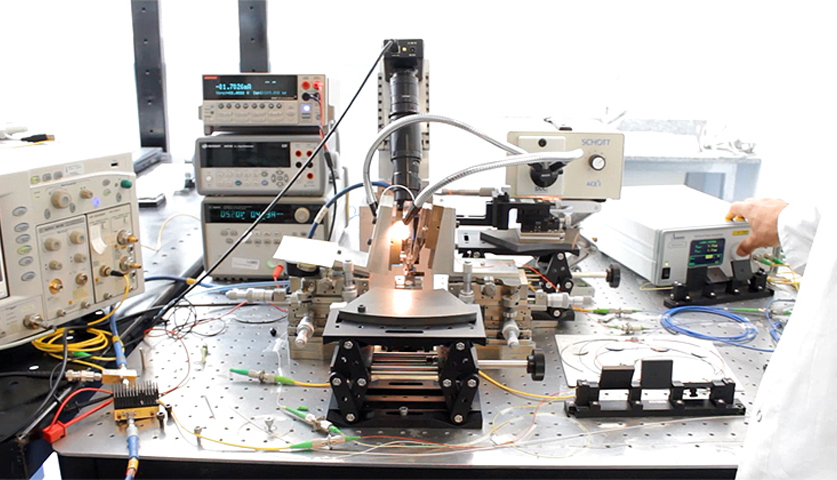

ExaNeSt develops and prototypes solutions for Interconnection Networks, Storage, and Cooling, as these have to evolve in order for the production of exascale-level supercomputers to become feasible. We tune real HPC Applications, and we use them to evaluate our solutions. Click for more information...

WHY we do it...

HPC is a precious tool for all of modern technology, science, and society. For the next generation of HPC systems, we need millions of low-power-consumption computing cores, tightly interconnected and packaged together and appropriately cooled, and with a new storage architecture. Click to see why...

HOW we do it...

We use the UNIMEM Global Address Space and zero-copy send/receive operations, and we tune our applications for such architectures. We develop efficient networking with all its frequent-path functions implemented in hardware, including advanced congestion management for full utilization at low latency; we distribute the file storage on top of that. Click to see more...

WHO we are...

The ExaNeSt Consortium combines industrial and academic research expertise, especially in the areas of system cooling and packaging, storage, interconnects, and the HPC applications that drive all of the above. Click to see who we are...

WHO we collaborate with...

The ExaNeSt consortium collaborates with two other contemporary and one subsequent H2020 projects: ExaNoDe (www.exanode.eu), focused on compute-node and memory concerns), ECOSCALE (www.ecoscale.eu), focused on heterogeneous architectures and specifically the efficient use of FPGA-based accelerators, and EuroEXA (www.euroexa.eu), focused to provide a petascale-level prototype by innovating both on technology and application/system software pillars. Click to see more...

Latest News:

- ExaNeSt Project Successfully Builds Prototype for Exascale, 16 September 2019

- ExaNeSt Milestone-4 achieved: Six-Blade HPC Testbed up and running, 16 April 2019

- Visit of Mr. Jean-Eric Paquet, EU Director General of Research and Innovation, to FORTH, 28 March 2019

- THE NEXT PLATFORM, 22 January 2019

HiPEAC: Shifting Focal Points in European HPC Research - Integration of the QFDB compute node on a mezzanine board, 20 September 2018

- Release of HPC mini-applications by the ExaNoDe project, 09 February 2018

- ExascaleHPC, 23 January 2018

Workshop on the main results from the three FET-HPC projects, ExaNoDe, ExaNeSt, and EcoScale

Project Info:

Call: H2020-FETHPC-1-2014 – (a) core technologies and architectures

Project Number, CORDIS Info: 671553

Duration: 01 Dec. 2015 – 31 May. 2019 (42 months).

Budget: Cost: 8.44 M€, EU contribution: 8.44 M€